Meet Jacques Morin – Sr. Director of Professional Programs & Learner Services

Earlier this year, Jacques Morin joined LILE as our new Senior Director of Professional Programs and Learner Services. This role is unique in that it’s a dual appointment – Jacques …

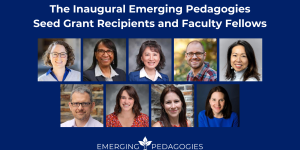

Dr. Candis Watts Smith

Dr. Sheila Patek

Dr. Cecilia Marquez